Export data from Cloud SQL to GCS using BigQuery Federated Query and Scheduled Query

Recently, I worked with a client who wanted to export data from Cloud SQL to GCS daily. They tried Cloud SQL Serverless Export orchestrated by Cloud Composer but found it is pretty expensive for their use case. For the context, with Serverless Export, Cloud SQL creates a separate, temporary instance to offload the export operation. It is expensive because of the extra resource provision.

In this blog post, I’ll show you how to export data from Cloud SQL Postgres (This solution works for MySQL too) database to GCS using BigQuery federated query and its own Scheduled Query feature.

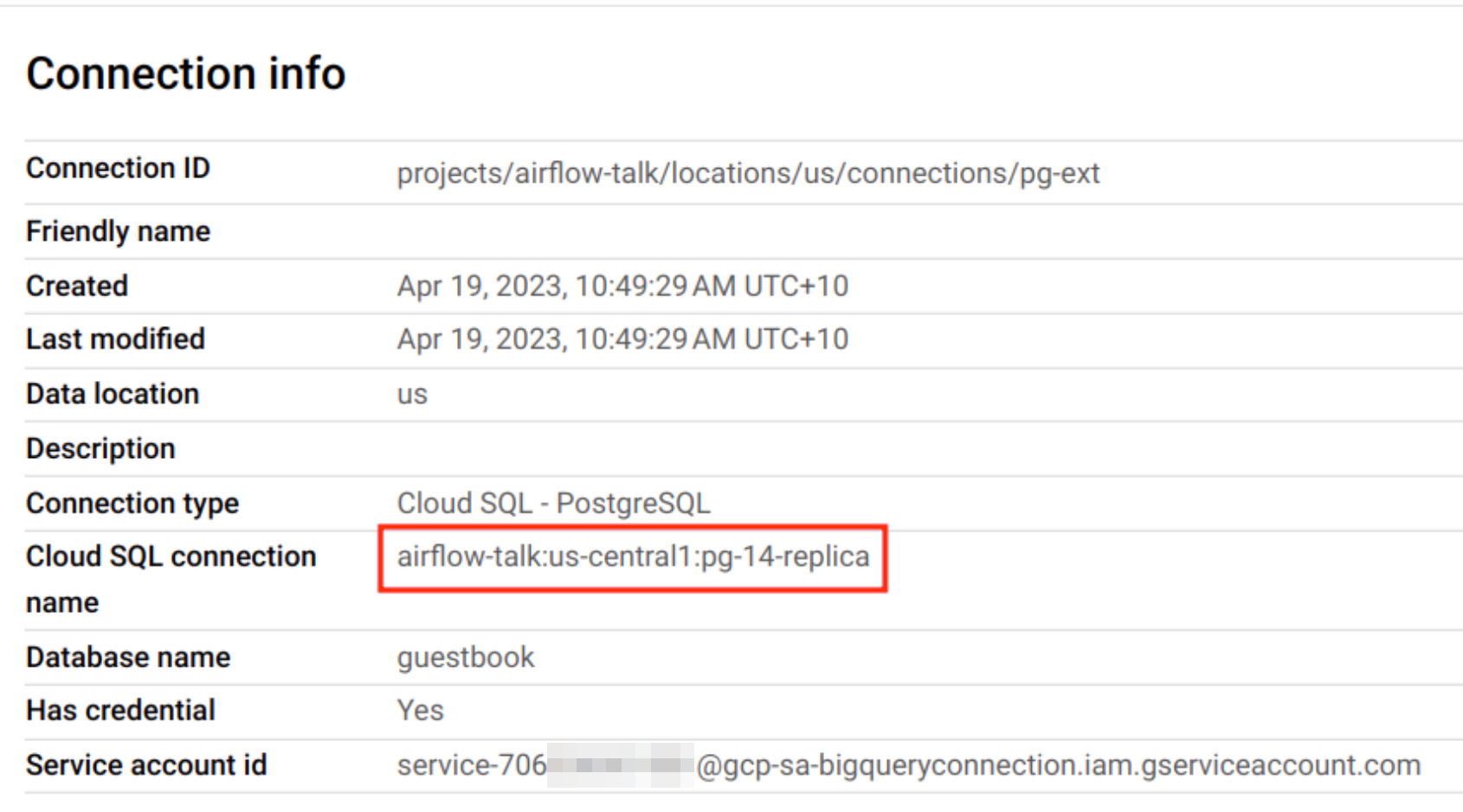

Step 1: Set up the Cloud SQL connection in BigQuery following this document. To avoid impacting the performance of main instance, I used a PostgreSQL read replica.

Step 2: Create a GCS bucket.

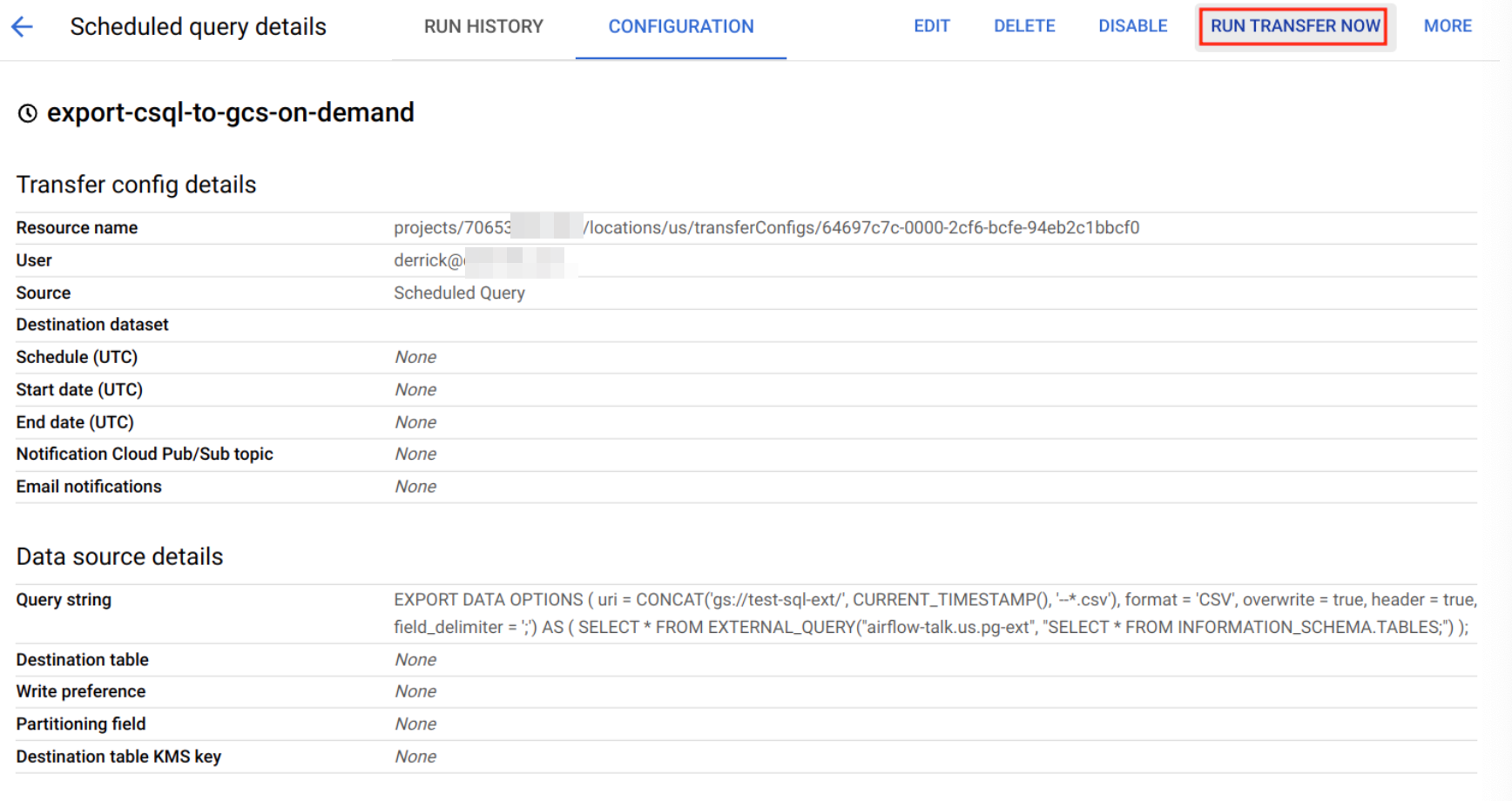

Step 3: Use the below SQL query to create a Scheduled Query following this document. For testing purpose, I created an on-demand job so that I can trigger it.

Note that in this SQL, I used BigQuery's EXPORT DATA statement, which can export query result directly to GCS.

EXPORT DATA

OPTIONS (

uri = CONCAT('gs://test-sql-ext/', CURRENT_TIMESTAMP(), '--*.csv'),

format = 'CSV',

overwrite = true,

header = true,

field_delimiter = ';')

AS (

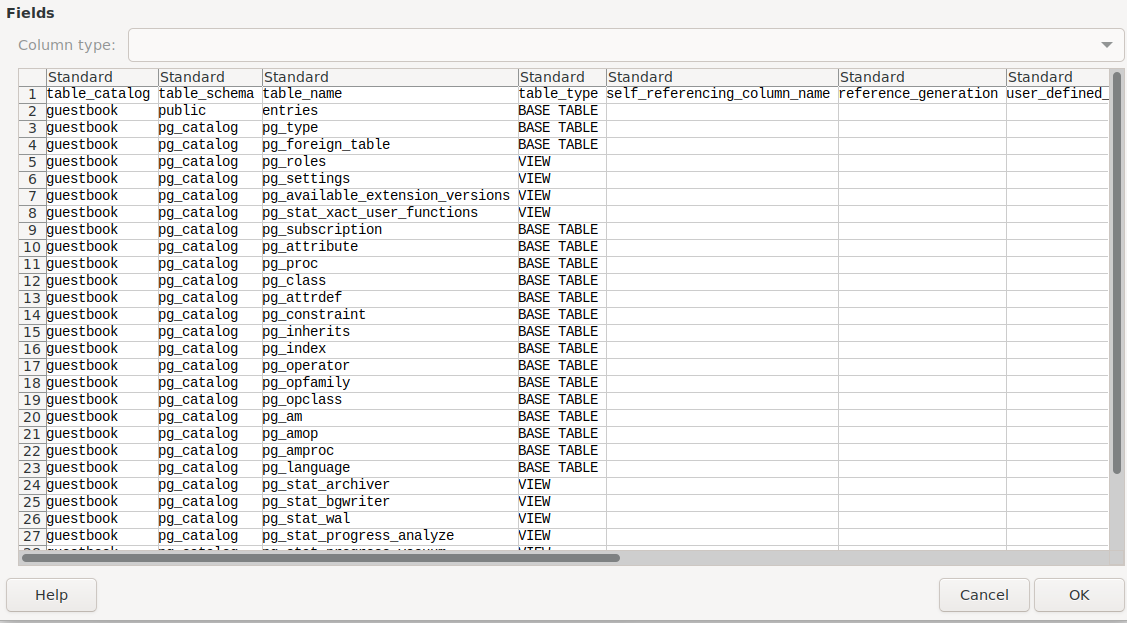

SELECT * FROM EXTERNAL_QUERY("airflow-talk.us.pg-ext", "SELECT * FROM INFORMATION_SCHEMA.TABLES;")

);

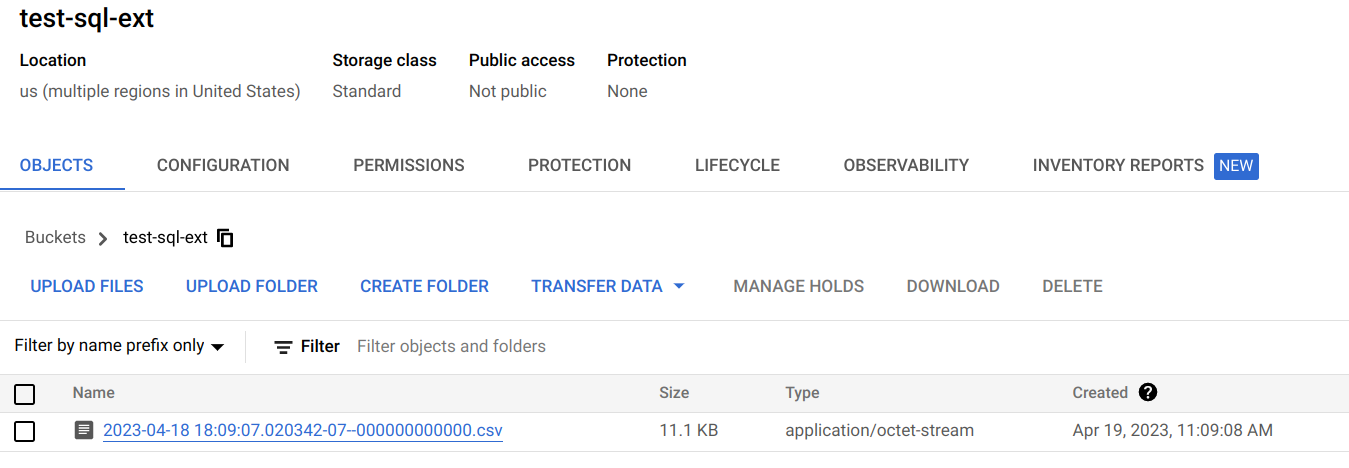

Step 4: Trigger the job and check the GCS file.

Step 5: verify the CSV file. It contains the data extracted the SQL query.

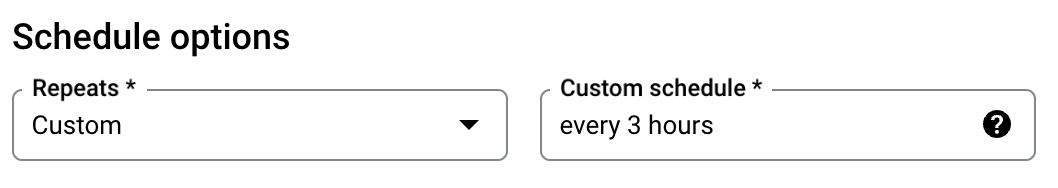

You can schedule the query to run. This part of the document talks about how you can set up your schedule. You can set up the schedule using the console or bq CLI. On the console, to specify a custom frequency, select Custom, then enter a Cron-like time specification in the Custom schedule field— for example, every mon 23:30 or every 6 hours .

That's it! We built a data pipeline that can extract data from Cloud SQL to GCS on a schedule. We didn't use Serverless export. The cost of the solution is much cheaper.